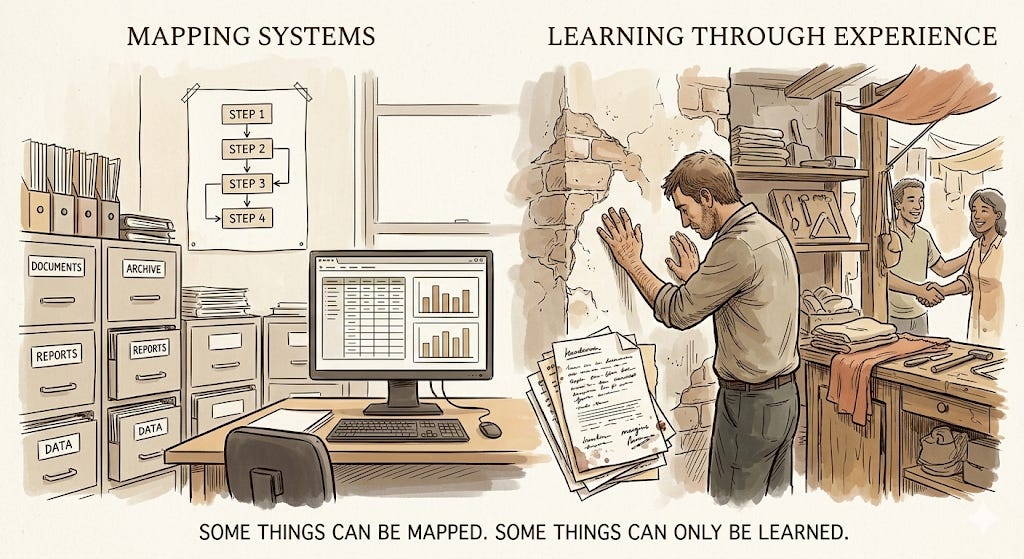

The Codified and the Foggy

Agents work brilliantly where knowledge is already written down. Most of the interesting work isn't.

There’s a conversation happening right now about how most people are stuck thinking of AI as chatbots, while the real opportunity is agents - long-running, autonomous systems that go off and do work while you sleep.

I think that framing is incomplete in a way that matters.

Coding agents genuinely work. I use them, and I’ve seen the time savings. But code is honest. You run it, it either does the thing or it doesn’t. The feedback loop is immediate and brutal. That’s not a property of AI - it’s a property of software. And that kind of feedback loop is actually pretty rare in the world.

AI is going to move fast in domains where knowledge is already codified. Tax, law, accounting, compliance, medical diagnosis - these fields have rules, precedents, structured data. A lot of what people spent years learning can be expressed in a system. AI is already moving through those fields quickly.

But a huge amount of work lives somewhere else entirely. In people’s heads. In relationships. In the physical world. In the gap between what’s written down and what’s actually true.

A shop owner who knows which supplier will quietly make good on a bad shipment without being asked. A manager who understands why someone is underperforming without it ever being said out loud. A contractor who looks at a wall and knows something is off before opening it up.

That knowledge doesn’t live in a document. It doesn’t have a feedback loop you can automate.

This is where “agents will transform all knowledge work” gets shaky. It conflates two very different kinds of work - the kind that can be written down and the kind that can’t. Saying agents will handle both equally is like watching a self-driving car nail a test track and concluding it’s ready for a snowstorm in a city it’s never seen.

The places where AI will struggle longest are exactly the places that look most human - foggy, relational, physical, judgment-heavy work where even experienced people disagree and consequences only surface months later.

The internet really did change everything. But the people in 1999 who were most confident they understood how, and how fast, were mostly wrong about the specifics - even when they were right about the direction.

The most useful question isn’t “are you building agents or chatbots?” It’s: is the knowledge here codified enough that a system can act on it reliably - and will the person responsible for the outcome trust it enough to let it?

That’s where the real work is.

🙏

Be kind,

Manuel